Warren D. Smith. Feb 2014 & Oct 2015. SLIGHTLY INCOMPLETE.

Abstract. One way to design a multiwinner voting system is to define a "quality function" Q which examines the votes, voters, candidates, and winner-set, and outputs a number. Then the "optimum voting system" would simply be to choose the winner-set maximizing Q. We define an infinite number of quality functions whose maximization (we show) automatically yields "proportional representation" (PR). We then argue for both principled and practical reasons that the best among them is "Psi voting" in which the quality function is

Here Δ is a positive constant pre-chosen by the voting system designer (we recommend ½≤Δ≤1 and conjecturally Δ=½ is best), V is the number of voters, C is the number of candidates and W the number of winners (0<W<C), scores are assumed to lie in the real interval [0,1], and Ψ(x) denotes the digamma function. We then give algorithms implementing this. On a single 2.0GHz processor the run time to perform the optimization by brute force exhaustive search is about 4V·Binomial(C,W) nanoseconds. We then realize that the alternative quality function ("harmonic voting")

(where Sv,c is the score voter v awards candidate c) may yield even better voting systems! This, despite appearances, actually requires less computational work if 2C<V, because the Psi and Harmonic systems are related by a "Kotze-Pereira transformation."

We argue that it is NP-hard to determine the winner-set in "optimum PR" multiwinner elections – indeed even approximate optimality is NP-hard – and that when W is large all such elections become immensely vulnerable to "strategic voting." Therefore we only recommend these methods when W is small. We state various important properties that multiwinner voting systems could satisfy (our QPsi system satisfies all except UFC, CRC, SFC) then formulate what at the present time seems to be the most important theoretical open question about them.

This is part I of a multi-part paper with at least two parts.

One way to design a multiwinner voting system is to define a "quality function" which examines the votes, voters, candidates, and winner-set, and outputs a number. Then the "optimum voting system" would simply be to choose the winner-set yielding maximum quality.

But finding that best subset might be computationally infeasible if the numbers of candidates and winners are too large – e.g. with 50 candidates and 25 winners the number of possible winner-sets would be 50!/(25!)² = 126410606437752 ≈ 1.26×10¹⁴ which, if we just employed the brute force approach of computing the quality function for all of them, would take 4 years even at a rate of 10⁶ sets per second. That is a different question, about computational complexity.
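The arithmetic behind that estimate can be checked in a few lines. This is only a sanity check of the numbers quoted above; the rate of 10⁶ sets per second is the text's assumed figure, and the variable names are illustrative.

```python
import math

# Number of ways to choose 25 winners from 50 candidates: 50!/(25!)^2.
winner_sets = math.comb(50, 25)

# Assumed evaluation rate taken from the text: 10^6 winner-sets per second.
sets_per_second = 10**6
seconds_per_year = 365.25 * 24 * 3600

years = winner_sets / sets_per_second / seconds_per_year
print(winner_sets)      # 126410606437752
print(round(years, 1))  # 4.0
```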

We shall begin by proposing a highly general class of possible quality functions, which immediately yields an infinity of new PR (proportional representation) multiwinner voting systems, albeit not necessarily having any computationally efficient implementation.

All our systems work most naturally with score-style ballots, in which each voter, on her ballot, rates each candidate on a fixed numerical scale (for example from 0=worst to 9=best). Also, none of our systems involve political parties in any way; they are solely candidate-based. For our systems it is irrelevant whether parties exist or not, or have various internal properties or not. This stands in stark contrast to "party list" PR systems (the most popular kind worldwide at the present time). Any voting system which inherently involves political parties and needs them to function will of necessity hurt independent candidates and presumably independent thinking itself. To quote professor Marcus Pivato (in email) about that:

"Personally, I think political parties are a pernicious element of government. Ideally, we would dispense with them altogether. But probably, they are inevitable and a necessary evil. But in any case, their power should be minimized."

Another problem is that parties can contain subfactions. For example, the USA Republican party as of year 2015 is really two parties masquerading as one, according to Warren Buffett in several media interviews. (In 2008, Buffett was the richest person in the world, and his father was a Republican congressman from Nebraska.) If PR is a good idea, shouldn't it also be a good idea for "effective" parties as well as "real" parties? You cannot get that effect with "party list" PR, but can with party-free PR schemes.

Among our infinity, there are reasons to argue that a particularly simple subtype, which we call the "psi PR" voting systems, is the "best of the best." But after I had happily reached that verdict, Toby Pereira showed me the concept of the Kotze-Pereira transform of a voting system. And there are reasons to believe that the Kotze-Pereira transform of Psi voting is better still. The resulting system enjoys a better combination of provable properties than all previous PR voting system proposals for electing committees... provided we have enough computational power to run the election.

We then will examine the computational complexity question and will produce enough evidence to generate the strong suspicion that efficient algorithms to find the optimum-quality winner-set are impossible! Namely, we have NP-hardness theorems plus results showing that for all our quality functions we expect the associated optimization problem to be highly nonconvex. Optimizing quality actually would be an entirely feasible "convex programming" problem if certain things were a little different, but they aren't, and demands for PR are the underlying reason they aren't. This all suggests that unless the number C of candidates in the election is small enough so that the number C!/([C-W]!W!) of W-winner-subsets is small enough so that we can exhaustively examine all of them, "optimum proportional representation" is not going to be computationally feasible.

Intuitively speaking, this makes sense. There is a fundamental conflict involved in proportional representation. PR can force some country to elect MPs whom it individually does not like in comparison with other options. For example, the Nazi party might be popular with 2% of the population, but the other 98% hates them; with PR 2% of the seats in parliament need to be Nazi.

Related paradox: If voters were asked to choose between two parliaments, one of them proportional, the other 100% Whig, then a 51%-Whig electorate might well choose the latter by simple majority vote!

On the other hand, without PR, the country also suffers. For example, Canada in 2008 voted 6.8% for the Green party, but zero Green MPs were elected because each riding gave more votes to a non-Green. At that time Canada was using a non-PR system of simply electing the plurality-voting winner in each riding. Plurality also amplified the problem through strategic voting imperatives: it was strategically foolish to vote Green because your vote then would be "wasted" (could not affect the main contenders for your riding's seat). Probably if this strategy conundrum had not existed (which it would not have, if Canada instead had used a suitable better-than-plurality voting system, such as score voting, within each riding) the Greens would have gotten a lot more than 6.8% of the votes. Proportionally speaking, based on their 6.8% vote share, the Greens "deserved" 21 seats; and without plurality's strategy-caused distortion they quite likely would have deserved considerably more. In any case, it probably was not beneficial for Canada's environment to shut out all Greens from parliament during that era.

This fundamental conflict causes what physicists have called "frustration." That is, in solid state physics, various materials "want" to crystallize to reach their "ground energy state" but due to geometrical obstacles all the "nice" crystal forms which might have hoped to be optimal, cannot quite exist. So then the material solidifies into a glassy "amorphous" state and is unable to reach the truly optimum ground state essentially because it is too computationally hard to find. (In theory with extremely slow annealing for aeons, it might be able to crystallize.) In some cases physics "frustration" has been modeled with simple mathematical models and then it has been proven mathematically that finding the true energy minimum in those models is an "NP-complete" computational task. (Examples: the "spin glass" model and "Wang tiling problems.")

Our investigation here shows that the same phenomenon happens with "optimum proportional representation." Is there any way to escape from this conclusion of doom?

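1. One escape is that it is computationally feasible to find the exact optimum winner-committee if the number of candidates is small enough for exhaustive search. The following tables give the number (2N)!/N!² of N-winner subsets of 2N candidates: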

| 2N | 2 | 4 | 6 | 8 | 10 | 12 | 14 | 16 | 18 | 20 |
|---|---|---|---|---|---|---|---|---|---|---|
| (2N)!/N!² | 2 | 6 | 20 | 70 | 252 | 924 | 3432 | 12870 | 48620 | 184756 |

| 2N | 22 | 24 | 26 | 28 | 30 |
|---|---|---|---|---|---|
| (2N)!/N!² | 705432 | 2704156 | 10400600 | 40116600 | 155117520 |

| 2N | 32 | 34 | 36 | 38 | 40 |
|---|---|---|---|---|---|
| (2N)!/N!² | 601080390 | 2333606220 | 9075135300 | 35345263800 | 137846528820 |

2. Forest Simmons suggests the following brilliantly simple (?) trick for dodging the computational roadblock: ask the candidates, voters, and/or other interested entities (anybody who wants) to suggest winner sets. (An automated web site could accept submissions.) If anybody succeeds in finding a new-record higher quality set, we switch to it. If not, then we just tell the disgruntled candidates "if you lost but think you should have won, it is your own damn fault for not suggesting a better winner set than you did."

3. Another possible escape is that the present discussion suggests the hopelessness only of good quality PR voting systems in which the winners are a function of the votes, and of them alone. PR voting systems, such as asset voting, in which the winner set is not a function purely of the votes, still could have hope.

4. Our final escape hatch is to "cheat" by devising PR notions based on explicit party names, not just purely on the votes for different candidates. But any such system would likely treat independent candidates unfairly and/or would require laws preventing MPs from switching parties (since otherwise they could "game the system"; such laws have been enacted for this reason in, e.g., New Zealand). Both of those seem dangerous.

One particularly concrete and embarrassing kind of PR "frustration" was pointed out by Toby Pereira and we will explain it later. Pereira's problem affects some multiwinner voting systems worse than others – it seems to afflict STV ("single transferable vote") systems particularly severely – but it might be unavoidable. Essentially that is an open question.

But even if we may assume that we have more than enough computational power to perform optimum PR elections... there then would be other problems. Our systems are "optimal" only for honest voters. What if the voters try to "game the system" by voting dishonestly? We have arguments that our "psi PR" systems, and probably every committee-election system that tries to achieve near-optimal results for honest voters, inherently are very vulnerable to strategic voting. The larger the committee we elect, the more vulnerability there generally will be. Also, the more incentive each voter would have to be strategic, because, e.g. in our example at the start with 1.26×10¹⁴ winner sets to consider, obviously at least half of these sets must have quality-value lying within about 10⁻¹³ of some other set's quality (in units such that the quality range has width 1). Therefore in an election with a mere 10⁴–10⁹ voters, any particular voter would quite plausibly be able to alter the election result singlehandedly. This is in contrast to large single-winner elections where almost all of the time, all voters are individually powerless.

The conclusion of all these thoughts is simple: avoid multiwinner elections that elect large committees. Only elect small committees, or (most simply) single winners. Elections of large committees, even with our optimal methods, inherently suffer major problems related to both strategic voting, and computational complexity. If you are going to elect a small committee, then our "Pereira-transformed Ψ voting" – aka "harmonic voting" – scheme with Δ=1/2 seems the best method currently known that is completely based on the votes. (Asset voting is another approach in which the candidates, not just the voters, play roles.)

Let C=#candidates, V=#voters, W=#winners, 0<W<C<V, and let Sv,c be the real-valued score given by voter v to candidate c on her ballot. Then here is our highly general class of quality measures Q:

where A1, A2, A3, ... are real numbers specified by the voting system designer, and F(x) is a function also specified by the voting system designer.

I call these quality functions "linear" because the innermost sum is a linear function of the scores on the ballots. "Nonlinear" quality functions will be studied in a different paper.

Obviously, if the real number F(x) is regarded as computable in a constant amount of time from its real number input x, and if the W winners all are known (and all the scores Sv,c), then Q can be computed in time O(VWlogW) by sorting the W winners by score as regarded by each voter, and computing the sums. Hence, the best W-element winner-set (maximizing Q) can be computed in polynomial(V,C) time if C is bounded, by just brute force examination of every possible W-element winner-subset.
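A minimal sketch of that brute-force procedure follows. It assumes, reading the description above, that Q sums over voters the value F applied to a weighted sum in which Aj multiplies the j-th highest score that voter gave to any winner; the function names, the tiny score matrix, and the choice Aj=1/j with F(x)=x are all hypothetical illustrations, not the paper's recommended parameters.

```python
from itertools import combinations

def quality(scores, winners, A, F):
    """Assumed form: Q = sum_v F( sum_j A[j] * (j-th highest score v gave a winner) )."""
    total = 0.0
    for ballot in scores:
        # Sort this voter's scores for the winners, best first: O(W log W).
        ranked = sorted((ballot[c] for c in winners), reverse=True)
        inner = sum(a * s for a, s in zip(A, ranked))
        total += F(inner)
    return total

def best_winner_set(scores, num_winners, A, F):
    """Exhaustively examine every W-element subset of the candidates."""
    num_candidates = len(scores[0])
    return max(combinations(range(num_candidates), num_winners),
               key=lambda winners: quality(scores, winners, A, F))

# Hypothetical election: 3 voters (rows), 4 candidates (columns), scores in [0,1].
scores = [
    [1.0, 0.8, 0.0, 0.0],
    [1.0, 0.6, 0.0, 0.1],
    [0.0, 0.0, 1.0, 0.9],
]
A = [1.0, 0.5]        # illustrative weights A_j = 1/j
F = lambda x: x       # illustrative choice of F

best = best_winner_set(scores, 2, A, F)
print(best, quality(scores, best, A, F))  # (0, 2) 3.0
```

The search visits Binomial(C,W) subsets, so it is only practical for small C, as the text says.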

Our definition of "proportional representation" (PR): if all voters and candidates are different "colors" and each voter scores each same-color candidate 1, rest 0, then the proportion of color-X winners must come out the same as the proportion of color-X voters, for all X, up to at most constant additive errors in #winners (provided enough candidates of each color are running – obviously you cannot have 3 red winners if only 2 reds run).

Examples:

But those were only two special cases. More generally, we can assure PR by choosing the Aj>0 and F() such that

to good enough approximation, where again K1,K2,Δ are any real constants with K2>0 and Δ>0. It seems intuitively preferable for the Aj to be positive and nonincreasing with j and F(x) to be increasing with x, so below I'll only give examples obeying those.

Then an infinite number of such examples include:

This class of quality functions is a large generalization of an idea of Forest Simmons yielding a class of PR voting systems we had called "logarithmic penalty voting." The reason this works – i.e. yields PR – is that if some "red" winner were replaced by a "blue" winner in a PR party-line-voting situation, then, essentially, that is favored (increases Q) if and only if the blue/red voter ratio exceeds the blue/red winner ratio. This happens essentially because of the property Ψ(x+1)=Ψ(x)+1/x of the psi function. The constants K1, K2, Δ give us a certain bounded amount of control over the precise proportionality guarantee, but essentially PR should be accurate to within an additive constant number of winners provided enough candidates of each color run.

To look at that in slow motion, consider example A (harmonic voting).

Suppose there are R currently-winning red candidates and G currently-winning green candidates. Suppose there are r red voters and g green voters. The summands within Q affected by electing one particular green or one particular red candidate, neither of whom has been elected yet (we ignore all the others, since their contributions will stay fixed for our purpose) would be r/(R+1+K) or g/(G+1+K) respectively. So depending on which is greater, evidently the new red, or the new green, will be elected next to maximize Q. So any winner-set maximizing Q automatically must obey

We similarly can consider example B above. The exact same reasoning (which also works for the general case) shows that if Q is maximum then
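As a numerical illustration of the marginal-gain argument for the harmonic case (a sketch, not the paper's own formula), suppose every voter scores same-color candidates 1 and all others 0, and that enough candidates of each color run. Then a voter with n same-color winners contributes 1/(1+K) + 1/(2+K) + ... + 1/(n+K) to Q, which is the sum of the marginal terms described above. The function names and the 62/38 electorate are hypothetical.

```python
def bloc_quality(voters, seats, K):
    """Q for color blocs: each voter with n same-color winners adds sum_{j=1..n} 1/(j+K)."""
    return sum(v * sum(1.0 / (j + K) for j in range(1, n + 1))
               for v, n in zip(voters, seats))

def best_seat_split(red_voters, green_voters, W, K=0.0):
    """Try every split (R, G) with R+G=W and keep the one maximizing Q."""
    splits = [(R, W - R) for R in range(W + 1)]
    return max(splits,
               key=lambda s: bloc_quality((red_voters, green_voters), s, K))

# 62% red and 38% green voters electing W=5 winners.
print(best_seat_split(62, 38, 5))  # (3, 2)
```

The exact proportional shares here would be 3.1 and 1.9 seats, so the maximizing split (3, 2) is the nearest whole-seat outcome. At that optimum neither swap helps: 62/3 ≥ 38/3 and 38/2 ≥ 62/4.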

An argument can be made that one quality function, among the infinite number defined above, is the "best" one, or anyhow better than others.

Namely, I suggest that the psi-function-based schemes in "example B" above – succinct definition in appendix – are best – the "optimal optimal" system – for several reasons:

If a voter increases her score for X, while leaving all other scores (for candidates other than X) on her ballot unaltered, that will increase the Q's for all X-containing committees while leaving the Q's for all X-omitting committees unaltered. [Same sentence also true with all instances of "increase" changed to "decrease."] This property (and the next) actually is true for all election systems of our class (provided the Aj are positive and decreasing and F is increasing), not just the Psi system from example B. In contrast, many other PR voting systems, such as STV (single transferable vote, currently used in Ireland, Australia, and Malta) are nonmonotonic, e.g. a voter by decreasing X's score on her ballot, can actually cause X to win.

Woodall free ridership (Woodall 1983) is a very serious flaw in a voting system because when it backfires due to too many voters adopting this strategy, the result not only is the failure to elect some good candidate such as X, but also the election of a very bad candidate such as B.

Brian Meek proposed (Meek 1969-70, Hill et al 1987; STV systems generally are reviewed by Tideman 1995) a very complicated variant form of STV voting (requiring a computer) which enjoyed immunity to Woodall free ridership. A variant by Hugh Warren also does. However, Britain's electoral reform society (ERS) eventually decided to recommend plain Hare/Droop STV in spite of this superior property of Meek-STV, because it considered Meek's & Warren's variants too complicated.

My own RRV system is both simpler than STV and immune to Woodall free ridership because it has no "elimination" steps.

Among the Psi-PR voting systems involving a quality function based on the function

But now a genuine question arises: what is the best choice of Δ?

First, I believe that the interval ½≤Δ≤1 contains the right answer, and is the only defensible range based on the mathematics within the proportionality proof above. Second, roughly speaking,

I believe that the latter are superior. My reason is that large political parties tend to have advantages in real world political campaigns that threaten to cause them to over-dominate the scene above and beyond what they "really" proportionately "deserve." Webster/Sainte-Laguë tries to counteract that, but (I conjecture) it does not fully succeed, in the sense that, I suspect, in the real world large parties still will tend to have advantages despite Webster/Sainte-Laguë. Anyhow Webster does not greatly favor the small parties, since it is "limit unbiased" (Janson 2014), and since computer simulations of USA-apportionment indicate that even Webster still has a small pro-big bias. Indeed one theoretical model would claim the best Δ value should be slightly smaller than ½, such as 0.498.

| Δ | Country | Date | Party seat-fractions |
|---|---|---|---|
| 1/3 | Denmark | June 2015 | 26.9%, 21.1%, 19.4%, 8.0%, 7.4%, 5.1%, 4.6%, 4.0%, 3.4% |
| 1/2 (Sainte-Laguë) | Latvia | Oct. 2014 | 24%, 23%, 21%, 17%, 8%, 7% |
| 1/2 (Sainte-Laguë) | Sweden | Sep. 2014 | 32.4%, 24.1%, 14.0%, 7.2%, 6.3%, 6.0%, 5.4%, 4.6% |
| 1/2 (Sainte-Laguë) | Norway | Sep. 2013 | 32.5%, 28.4%, 17.2%, 5.9%, 5.9%, 5.3%, 4.1%, 0.6% |
| 1 (d'Hondt) | Finland | Apr. 2015 | 24.5%, 19.0%, 18.5%, 17.0%, 7.5%, 6.0%, 4.5%, 2.5%, 0.5% |
| 1 (d'Hondt) | Austria | Sep. 2013 | 28.4%, 25.7%, 21.9%, 13.1%, 6.0%, 4.9% |
| 1 (d'Hondt) | Portugal | Oct. 2015 | 44.3%, 37.4%, 8.3%, 7.4%, 2.2%, 0.4% |
| 1 (d'Hondt) | Cambodia | July 2013 | 55.3%, 44.7% |
| 1 (d'Hondt) | Turkey | Nov. 2015 | 57.6%, 24.4%, 10.7%, 7.3% |
| 2 (Imperiali) | Ecuador | Mar. 2013 | 73.0%, 8.0%, 4.4%, 3.6%, 3.6%, 3.6%, 2.2%, 0.7%, 0.7% |

In Sweden's 2014 election using Sainte-Laguë party-list PR, 8 parties won seats, but over 56% of the seats went to the top two parties. Evidently, these two parties are not frantically trying to fission into smaller fragments in order to "take advantage" of any alleged theoretical "benefits" of fissioning conveyed by Sainte-Laguë. Similar but stronger remarks could be made about Norway 2013. Meanwhile in Turkey, which uses d'Hondt, only 4 parties won seats in 2015, with the top party taking 57.6% of the seats all by itself, and the next party 24.4%. Denmark actually "goes beyond Sainte-Laguë" to try to favor party-fissioning even more, corresponding to Δ=1/3, and apparently (based on comparing their 2015 election results versus Sweden's & Norway's) that worked. Meanwhile, Ecuador goes in the other direction ("beyond d'Hondt") to try to favor party-fusion even more than d'Hondt does – this would correspond to Δ=2 for us – and that also apparently worked, based on their 2013 election results, which gave 73% of the seats to the top party. Of these countries, it looks to me that the Sainte-Laguë parliaments presently are the healthiest. (Data.)

If my conjecture is correct, then it would follow that Δ=1/2 is the best choice, yielding the "best of the best of the optimal" systems.

Forest Simmons then told me that he and S.J. Brams had both reached this same conjectural conclusion of the optimality of Δ=½. Indeed, see Benoit 2000 for an analysis of several thousand real-world elections, again concluding that (unmodified) Sainte-Laguë yields the best proportionality of all the roundoff methods he considered. Then Simmons went a bit further to propose the especial elegance of also then choosing K1 and K2 to yield

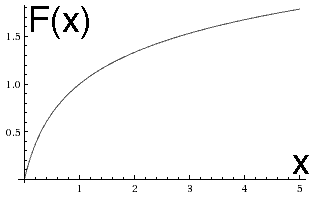

The point of this translation and scaling is that it causes F(0)=0 and F(1)=1, and the shift-by-1 recurrence becomes

Why we say Δ=½ is like "Sainte-Laguë" and Δ=1 like "d'Hondt":

For "party list voting," we quote wikipedia's definition of the Sainte-Laguë method:

"After all the votes have been tallied, successive quotients are calculated for each party. The formula for the quotient is quotient = V / (2S+1) where:

- V is the total number of votes that party received, and

- S is the number of seats that party has been allocated so far, initially 0 for all parties.

Whichever party has the highest quotient gets the next seat allocated, and their quotient is recalculated given their new seat total. The process is repeated until all seats have been allocated."

D'Hondt is the same except with quotient=V/(S+1). More generally for "classical divisor methods" quotient=KV/(S+Δ) where the value of K>0 does not matter.
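The seat-by-seat procedure just described can be sketched as follows, using the general quotient V/(S+Δ) (the constant K is dropped since it does not affect which party has the highest quotient). The function name and the three-party vote totals are hypothetical, and ties are broken arbitrarily by Python's `max`.

```python
def divisor_method(votes, seats, delta):
    """Allocate seats one at a time to the party with the highest V/(S+delta)."""
    allocation = {party: 0 for party in votes}
    for _ in range(seats):
        winner = max(votes, key=lambda p: votes[p] / (allocation[p] + delta))
        allocation[winner] += 1
    return allocation

# Hypothetical vote totals for three parties competing for 7 seats.
votes = {"A": 53, "B": 24, "C": 23}
print(divisor_method(votes, 7, 0.5))  # Sainte-Laguë: {'A': 3, 'B': 2, 'C': 2}
print(divisor_method(votes, 7, 1.0))  # d'Hondt:      {'A': 4, 'B': 2, 'C': 1}
```

In this example the larger Δ hands the largest party one extra seat at the expense of the smallest.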

A quite different, but known to be equivalent, way of viewing d'Hondt and Sainte-Laguë: If party j has Vj votes, then the number of seats they deserve is Round(VjC), where Round is the round to nearest integer function for Sainte-Laguë, and is the floor (downward rounding) function for d'Hondt, and C>0 is any real number which causes the correct total number of seats (summed over all parties) to be gotten.

To examine the connection between those classical methods and Ψ(x+Δ) voting, let us first suppose (to make everything maximally simple) that there are exactly two political parties – Red and Blue – and that each voter is maximally partisan, i.e. scoring her party 1 and the other 0; and that we are electing exactly W=2 seats. Suppose a fraction Y≥½ of the voters are Red. Then all divisor methods give the first seat to the Reds. The only question is which party gets the second seat. The answer is the Reds also win the second seat if Y is above some threshold, but the Blues win it if Y is below that threshold.

| System | Threshold |
|---|---|
| Sainte-Laguë, Δ=½ | 3/4 |
| d'Hondt, Δ=1 | 2/3 |
| General Δ | (Δ+1)/(2Δ+1) |

Now, the proof of the theorem that exactly these same thresholds happen with Ψ(x+Δ) voting as with the classical party-list divisor methods (in this simple 2-seat, 2-party scenario) is that

are exactly equal, if and only if, Y lies precisely at threshold: Y=(Δ+1)/(2Δ+1). (And yes, this is a true identity obeyed by the Ψ function.)
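The identity can be checked by hand. As a sketch, assume that with maximally partisan 0/1 ballots each voter contributes Ψ(n+Δ) to Q, where n is the number of winners from her own party, and normalize the electorate to total weight 1 (the labels Q_RR and Q_RB are introduced here for illustration). The two possible outcomes for W=2 then have qualities

$$Q_{RR} = Y\,\Psi(2+\Delta) + (1-Y)\,\Psi(\Delta), \qquad Q_{RB} = Y\,\Psi(1+\Delta) + (1-Y)\,\Psi(1+\Delta) = \Psi(1+\Delta).$$

Subtracting Ψ(Δ) from both and applying Ψ(x+1)=Ψ(x)+1/x, the tie condition Q_RR = Q_RB becomes

$$Y\left[\frac{1}{\Delta} + \frac{1}{1+\Delta}\right] = \frac{1}{\Delta} \quad\Longrightarrow\quad Y = \frac{\Delta+1}{2\Delta+1}.$$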

Now let us examine a more general scenario. Suppose using a classical divisor method, with exactly two parties Red and Blue, maximally-partisan voters, that the Reds have won R and the Blues B seats, and exactly one more seat must be filled. Who wins it? With classical divisor methods, the answer again is that if the Red voter fraction Y exceeds a threshold, then the Reds win it. The formula for the threshold is

with solution

which can be rewritten with the aid of the Ψ(x+1)=Ψ(x)+1/x identity as

which is the same formula. This proves that the analogy between Ψ(x+Δ) voting and classical divisor methods with divisor=Δ, is quite a good analogy.
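The chain of formulas can be sketched as follows; this is a reconstruction from the surrounding definitions, not a quotation of the original equations. For the divisor method the threshold is where the two quotients tie:

$$\frac{Y}{R+\Delta} = \frac{1-Y}{B+\Delta} \quad\Longrightarrow\quad Y = \frac{R+\Delta}{R+B+2\Delta}.$$

For Ψ(x+Δ) voting, assuming each maximally partisan voter contributes Ψ(n+Δ) when her party holds n seats, the threshold is where the two marginal gains in Q tie:

$$Y\,\big[\Psi(R+1+\Delta) - \Psi(R+\Delta)\big] = (1-Y)\,\big[\Psi(B+1+\Delta) - \Psi(B+\Delta)\big],$$

and since Ψ(x+1)−Ψ(x)=1/x the bracketed differences equal 1/(R+Δ) and 1/(B+Δ), which is the divisor-method condition again. Setting R=1, B=0 recovers the two-seat threshold (Δ+1)/(2Δ+1).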

A second way in which Δ=½ can be claimed to yield Sainte-Laguë style proportionality – I won't explain the details of why here, but see the second paper in this series' discussion of "logarithmic" voting – arises from the fact that the asymptotic series

has zero x⁻¹ term (indeed, all odd-power terms x⁻³, x⁻⁵,... vanish) exactly when Δ=½. This causes Sainte-Laguë like behavior for parties with large numbers of voters and seats.
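For reference, the leading terms of this expansion, stated here from the standard Bernoulli-polynomial form of the digamma asymptotics rather than copied from the original page, are

$$\Psi(x+\Delta) \sim \ln x + \frac{\Delta-\tfrac12}{x} - \frac{\Delta^2-\Delta+\tfrac16}{2x^2} + O(x^{-3}) \qquad (x\to\infty).$$

The coefficient of 1/x is Δ−½, which is zero exactly when Δ=½; at that value the next term reduces to +1/(24x²).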

A myth. It was suggested to me that, if all voters provided "approval style" scores (all scores either 0 or 1 with no intermediate values such as ½) then psi-voting would become identical to RRV. Although this suggestion is correct in simple "colored voters & candidates, plus universally-approved uncolored candidates" scenarios with "maximally racist" voters, overall it is wrong unless P=NP (and even then, it still would be wrong due to specific counterexample elections).

The "Kotze-Pereira transform" is as follows. Replace any ballot which rates the C candidates with scores

by these C weighted approval ballots

(1,1,1,...,1,1) with weight S_C

(1,1,1,...,1,0) with weight S_{C-1} - S_C

...

(1,1,0,...,0,0) with weight S_2 - S_3

(1,0,0,...,0,0) with weight S_1 - S_2.

Note: the candidates were ordered by decreasing scores on the ballot under consideration. That assures that all the weights come out nonnegative (a weight is zero when two consecutive scores are tied). For example the score ballot (9,5,3) in a three-candidate election would be replaced by

and hence the score ballot (5,9,3) by

This "replacement of score ballots with weighted approval ballots" idea was invented by Toby Pereira.
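The transform is short to write down in code. The sketch below follows the definition above; the function name is hypothetical, zero-weight ballots (which arise from tied scores) are simply omitted, and approval ballots are reported in the original candidate order rather than the sorted order.

```python
def kotze_pereira(ballot):
    """Turn one score ballot into a list of (approval_ballot, weight) pairs."""
    num_candidates = len(ballot)
    # Candidate indices ordered from highest score to lowest.
    order = sorted(range(num_candidates), key=lambda c: ballot[c], reverse=True)
    result = []
    for m in range(num_candidates, 0, -1):       # approve the top m candidates
        next_score = ballot[order[m]] if m < num_candidates else 0
        weight = ballot[order[m - 1]] - next_score
        if weight > 0:                           # skip empty layers from ties
            approved = set(order[:m])
            approval = tuple(1 if c in approved else 0
                             for c in range(num_candidates))
            result.append((approval, weight))
    return result

print(kotze_pereira((9, 5, 3)))
# [((1, 1, 1), 3), ((1, 1, 0), 2), ((1, 0, 0), 4)]
print(kotze_pereira((5, 9, 3)))
# [((1, 1, 1), 3), ((1, 1, 0), 2), ((0, 1, 0), 4)]
```

The weights always sum to the ballot's highest score, here 9 in both cases.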

Theorem. The Kotze-Pereira transform converts the psi voting system in example B, into the inequivalent harmonic voting system of example A (both systems' Q-formulas were stated in the Abstract).
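A sketch of why this holds, assuming the psi quality function awards weight·Ψ(Δ+n) to a weighted approval ballot that approves n winners. The symbols n_m and s_(k) are introduced here for illustration: for one voter with sorted scores S_1≥S_2≥...≥S_C and S_{C+1}=0, let n_m be the number of winners among her top m candidates and s_(k) the score of her k-th favorite winner. Her transformed ballots contribute

$$\sum_{m=1}^{C} (S_m - S_{m+1})\,\Psi(\Delta+n_m) = S_1\,\Psi(\Delta) + \sum_{m=1}^{C} (S_m - S_{m+1}) \sum_{k=1}^{n_m} \frac{1}{k-1+\Delta} = S_1\,\Psi(\Delta) + \sum_{k=1}^{W} \frac{s_{(k)}}{k-1+\Delta}.$$

The first step uses Ψ(Δ+n)=Ψ(Δ)+∑_{k=1..n} 1/(k−1+Δ), which follows from repeated use of Ψ(x+1)=Ψ(x)+1/x. The second step swaps the order of summation: for fixed k the weights S_m−S_{m+1} over all m with n_m≥k telescope to s_(k). The term S_1Ψ(Δ) does not depend on the winner-set, so maximizing the transformed psi quality is the same as maximizing a harmonic sum with weights 1/(k−1+Δ), that is, weights of the form 1/(k+K) with K=Δ−1.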

This is interesting for several reasons:

In view of that, I think we should consider harmonic voting superior to psi voting, at least when the number of candidates is small enough for computational feasibility.

It appears that in a lot of multiwinner systems like ours (whose goal is to "elect a committee"), a natural kind of strategic voting is as follows. (Here I'll assume the voter has infinite computational resources, or anyhow large enough to be able to compute this strategy or at least guess something plausibly similar to it.)

This tries to maximize your ballot's impact on deciding between committees A and B. Observe that with large committees, this kind of voting becomes highly likely to be highly dishonest! And the larger the committee-size W, the more plausible ways there are to vote strategically dishonestly. If a lot of voters behaved this way, that could be devastatingly harmful!

This suggests to me that (even without worrying about computational obstacles, for which see next section) all voting schemes striving to elect committees 'optimally' or nearly are inherently in deep trouble when those committees become large. This objection pertains to all of our proposals here, as well as such previous proposals as STV and RRV. If so, then single-winner representation (e.g. each district elects a single MP using a single-winner voting system), perhaps with a small number of "top ups" to approach PR, is a considerably safer approach in the presence of strategic voters. Or: if one does insist on using multiwinner voting systems, then only elect small numbers of winners, e.g. each district electing exactly 3 MPs.

Optimists might hope we might be saved from this fate because voters might feel too stupid to apply such strategies – and hence would, as a last resort, vote honestly. Indeed, this hope has been stated by STV advocates (e.g. the reliably evidence-ignoring STV propagandists and pseudo-scientists from "FairVote," as well as the more scholarly Barber 2000) based mainly on intuition with little or no evidence. (Although see Van der Straeten et al 2010 for some experiments with mild relevance to this which might be regarded as mildly supporting the view of the optimists.) But I claim those optimists are wrong:

When faced with experimental evidence of strategic voting dating back to 1920 reported in real-world elections by the foremost STV proponents of their time, I recommend admitting that strategic voting indeed does happen with STV – although it usually takes more than one election before the voters start using these strategies in large numbers, which is why the Van der Straeten et al 2010 study was irrelevant. Incidentally, Van der Straeten did not cite any of our actual-historical-evidence papers, proving the adage that "those unaware of history are doomed to repeat it."

In any case, the next section will show that small committees will be forced upon us by computational limitations, if we seek optimal ones. Also, in many applications it would be considered inhumane to make voters vote in races containing more than 25 candidates. In that case elections with W≥4 winners would probably be inhumane, since in Canada's 1993 election, an average of 7.3 candidates competed for each single-winner MP seat, so that presumably 21.9 would have competed if Canada had instead employed 3-winner elections in 3× larger ridings, and there would have been 29.2 candidates with 4-winner elections. Admittedly I chose 1993 for this example because it featured the most candidates per seat among Canada's 10 federal elections 1980-2015; but even the election of 1984, which had the fewest at only 5.14 candidates per seat, still would exceed our inhumanity threshold with 25.7 candidates running on average if 5-winner ridings were used. And that 25.7 pertains for the average 5×riding; the extreme cases would be even worse.

Barber 2000 says that Irish Dáil seats are elected in W-winner districts (using an STV PR system) where originally 3≤W≤9 but over time the larger-W districts were eliminated so that now 3≤W≤5 in all cases, mostly 3s. According to her, Ireland has developed "quite stable 3-party domination" (Fine Gael, Labour, and Fianna Fáil), although as of Sep. 2015 nine parties held nonzero numbers of seats and 11% of the seats were held by independent (not party-affiliated) MPs.

The Australian Federal Senate also is elected via an STV PR system in which each of the six states elects 12 winners, plus each of the two mainland territories elects 2, for 76=12×6+2×2 seats in all. During 2014-2017 nonzero numbers of seats were held by 10 parties (with zero independent MPs holding seats), but only the top three parties (LibNat, Labor, and Greens) were of any importance, i.e. only those held more than 3 seats.

Remarkable polynomial time convex programming theorem – which fails. Suppose the Aj≥0 are nonincreasing with j≥1 and that F(x) is increasing and concave-∩ for all real x>0. Let ej be the "electedness" of candidate j, that is ej=0 if j loses and ej=1 if j wins. Let

Then it would be great if this Q were a concave-∩ function of the ej. In that case the problem of maximizing Q subject to 0≤ej≤1 and ∑1≤j≤C ej=W would be a "convex programming problem" in C-dimensional space and hence soluble (to determine the optimal vector of ej's to D decimal places of accuracy) in polynomial(C,D,V) time.

But that doesn't happen:

It is certainly possible to design F(x) and the Aj to make case i or ii happen. But neither happens, and neither can happen, for any F and Aj corresponding to reasonable voting systems, especially any obeying our PR condition above. (Think until you realize this.) So, sadly, we generally instead expect Q to be a sum of a large number of terms many of which are going to fail to be nice concave-∩ functions in the C-dimensional space. I.e, a mess.

Nobody currently knows how to efficiently find the global maximum of messy functions in C-dimensional space when C is large, and there are many known cases where this has been proved to be "NP-complete." For example, maximizing a general quadratic polynomial in a hypercube is NP-complete ("quadratic programming"). Indeed, even if the quadratic has only one negative eigenvalue, the minimization problem is still NP-hard [Pardalos & Vavasis 1991]. And Bellare & Rogaway 1995 showed even approximately solving quadratic programs is impossible (unless NP=quasiP).

[A book discussing convex programming and its polynomiality is Grötschel, Lovász, Schrijver 1988. A book on NP-completeness is Garey & Johnson 1979.]

Furthermore, even if that failed theorem had succeeded brilliantly, then the optimal vector of ej's arising from that theorem might not consist of exactly W values 1 and exactly C-W values 0. It instead might contain values within the interval (0,1) – "fractional winners." We still would have no known efficient way to find the optimum 01 solution with only full-winners and full-losers.

And indeed "01 integer programming" is known to be NP-complete even in the simplest case where the function to be maximized is linear subject to linear inequality constraints. Wikipedia's linked proof of that simply notes that the "minimum vertex cover" problem for a graph is an 01 integer program. That problem also can be regarded as an optimum multiwinner voting problem, so the same observation proves that solving such voting problems is NP-complete. And indeed minimum vertex cover is "APX-complete," a stronger unsolvability statement indicating that it is not possible in polynomial time (unless P=NP) to approximate the min to within approximation factors arbitrarily near 1. (More on this soon.)

So far, we have merely offered heuristic reasons to suspect that optimum-PR might be NP-hard. But in fact, we can prove that by cooking up a set of artificial elections in which the optimum winner set is both completely clear, and NP-complete to find. We employ another classic NP-complete problem regardable as a multiwinner voting problem:

Exact cover by 3-sets (X3C): Given a set V, with |V|=3q (i.e. the size of V is a multiple of 3), and a collection C of 3-element subsets of V. Question: does there exist a subset W of C such that each element of V occurs in exactly one member of W? (Then, |W|=q and W is an "exact cover" of V.)

Regard C as the set of candidates in an election, each of whom "satisfies" exactly 3 voters. The question then is equivalent to whether a set W of q winning candidates can exist providing "perfect representation," i.e. causing each voter to be satisfied.

Hardness Theorem I: It is NP-hard to find such a "perfect PR" winner-set, or even to decide whether one exists.
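To make the X3C-to-election correspondence concrete, here is a minimal sketch of the exhaustive search that Theorem I says we cannot, in general, avoid. Each candidate is a triple of the voters he satisfies. The function name `perfect_representation` and the toy instance are my own illustrative assumptions, not part of any standard library.

```python
from itertools import combinations

def perfect_representation(num_voters, triples):
    """Return indices of q candidates who together satisfy every voter,
    or None if no such winner-set exists.  Exhaustive search, so the
    run time grows like Binomial(len(triples), q)."""
    q, remainder = divmod(num_voters, 3)
    if remainder:
        return None  # X3C requires |V| = 3q
    everyone = set(range(num_voters))
    for winners in combinations(range(len(triples)), q):
        covered = set()
        for c in winners:
            covered |= set(triples[c])
        # q triples can cover all 3q voters only if they are pairwise
        # disjoint, so "covers everyone" already means "exact cover".
        if covered == everyone:
            return winners
    return None

# Toy election: 6 voters, 4 candidates, each satisfying exactly 3 voters.
triples = [(0, 1, 2), (1, 2, 3), (3, 4, 5), (0, 4, 5)]
print(perfect_representation(6, triples))
```

In this toy instance candidates 0 and 2 together satisfy each voter exactly once, so a perfect 2-winner parliament exists.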

Further remarks: X3C is known to remain NP-complete even if the input is restricted such that each element of V appears in exactly three triples and indeed even if, further, no two of the input triples share more than a single element. X3C originally was proved NP-complete by Karp in 1972 and is discussed by Garey & Johnson on pages 53 and 221.

An even better impossibility result arises from the following problem, for regular graphs:

Minimum vertex cover: Given a graph G (set of vertices and edges): find a min-cardinality subset of the vertices, that "covers" every edge, i.e. such that each G-edge has at least one endpoint in the set.

This is a well known NP-hard optimization problem. There is an incredibly simple and fast "greedy" method which is guaranteed to get within a factor 2 of optimum:

While the graph still has edges, repeat these three steps:

- Pick an edge (u,v) of the graph.

- Add both its endpoints to the vertex-cover set.

- Remove all edges incident to either of those two endpoints, from the graph.
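The three steps above can be sketched in a few lines. This is only an illustration under my own naming (`greedy_vertex_cover`, `is_cover`); the graph is assumed to be given as a list of vertex pairs. In election language each edge is a voter approving exactly two candidates, and the cover is a parliament in which every voter approves at least one MP.

```python
def greedy_vertex_cover(edges):
    """Pick-an-edge rule: returns a vertex cover at most twice the minimum size."""
    remaining = [tuple(e) for e in edges]
    cover = set()
    while remaining:
        u, v = remaining[0]          # pick any edge still present
        cover.update((u, v))         # add both of its endpoints
        # drop every edge that now has an endpoint in the cover
        remaining = [(a, b) for (a, b) in remaining
                     if a not in cover and b not in cover]
    return cover

def is_cover(edges, cover):
    """True if every edge has at least one endpoint in the cover."""
    return all(u in cover or v in cover for (u, v) in edges)

# Path graph 0-1-2-3: the minimum cover is {1, 2}, but the greedy rule
# (taking edges in the listed order) returns all four vertices.
path = [(0, 1), (1, 2), (2, 3)]
cover = greedy_vertex_cover(path)
print(sorted(cover), is_cover(path, cover))   # [0, 1, 2, 3] True
```

The path example shows that the factor 2 can actually be attained, so the guarantee cannot be improved for this particular rule.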

(The reason this assures factor≤2 approximation: every vertex cover must have at least one of the two vertices chosen in each round.) But despite much effort nobody has managed to provably decrease "2," even to 1.99. It is conjectured that this is because doing so is impossible. Dinur & Safra 2005 (building on Arora et al 1998, Bar-Yehuda & Even 1985, Berge 1978, Charikar 2002, Crescenzi et al 2001) showed guaranteeing approximation factor≤59/(21+10√5)≈1.3606... is NP-hard. Feige 2003 showed this remains true even if we restrict attention to "regular" graphs, i.e. in which every vertex has equal valency. (Experimentally, however, Asgeirsson & Stein 2005 were able to achieve approximation factors≤1.5 on every instance in a large collection of test graphs.) Also, Khot & Regev 2008 showed guaranteeing approximation factor≤2-ε is NP-hard (for any ε>0) provided Khot's unique games conjecture (UGC) is true. As Klarreich 2011 makes clear, UGC is now playing a fairly central role in computational complexity theory. It won its inventor, Subhash Khot, the 2014 Rolf Nevanlinna Prize, and a result of astonishingly grand scope by Raghavendra 2008 and Raghavendra & Steurer 2009 indicates that if true, the UGC tells us the exact best-achievable approximation factor for every "constraint satisfaction problem." Hence many smart people have worked on the UGC over the 14 years of its existence, so far without proving or disproving it. I personally take no stance on whether UGC is true, but it is clear that deciding whether it is true, is difficult.

Hardness Theorem II: With approval-style voting, finding a min-cardinality parliament such that each voter approves at least 1 MP, is NP-hard, and it also is NP-hard to get within 1.3606 factor of minimum, and under the unique games conjecture it is NP-hard to get within factor 2-ε. This all pertains to elections where each voter approves exactly 2 candidates, and each candidate is approved by a number N of voters not depending on the candidate.

Therefore, forget about "sequential" or "heuristic" polynomial-time algorithms hopefully producing approximately-optimum PR, unless you are willing to settle for factor>1.36 worse-than-optimum results in at least some elections – and unless you either

Zuckerman 1996 showed that "constrained max set cover" (CMSC) cannot be approximated to within a factor N^ε (for some ε>0) by any polynomial(N)-time algorithm, unless P=NP. Further, CMSC is "absurdly inapproximable" under an assumption NP-complete problems are not solvable in slightly-superpolynomial time by randomized machines, meaning even its k-fold iterated logarithm cannot be approximated to within some constant factor c (for any fixed k≥0, and some c depending on k).

Constrained max set cover (CMSC): Given a family S1, S2, ..., SN of N finite sets, and a subset T of {1,2,3,...,N}, and an integer W with 0<W<N such that the union of some W of the sets equals the union of all of them (those W "cover"), find W of the Si which (a) cover, and (b) whose indices i include the maximum possible number of entries from T.

This can be regarded as a proportional representation problem as follows. Let set Si be the voters who "like" candidate i. A W-element set cover is a set of W candidates such that every voter likes at least one of them. Also, in addition to liking and disliking candidates, let us now suppose it is possible to give candidates an intermediate score (a 3-level scoring system), and let T be the set of "acceptable" candidates who each are universally considered good enough to get at least that intermediate score. Given that we know that W-member "covering" parliaments exist,

Hardness Theorem III: The question of finding a W-member parliament which (a) covers, i.e. each voter likes at least one MP, and (b) contains the maximum possible number of "acceptable" MPs, is absurdly inapproximable in the same sense as Zuckerman 1996's theorem about CMSC.

This makes the point even more strongly that good PR election performance cannot be obtained by polynomial-time algorithms (unless the entire computer science community is very wrong about P and NP). Therefore, good performance in C-candidate elections presumably is only attainable by brute force computations having exponential(C) time-complexity.

Finally, let us note that I am not the only one who has complained about intractability for PR voting. Procaccia et al 2008 gave NP-completeness proofs showing "that winner selection in two previously proposed proportional representation voting systems (one by Monroe and Pothoff & Brams, the other by Chamberlin & Courant) both are computationally intractable problems, implying that these systems are impractical when the assembly is large." These two previous proposals both had required solving large "integer programs."

But even if we ignore computational complexity (assume we have infinitely powerful computers and can afford to maximize any Q we want) we'd still face another issue. None of our example Q's, and apparently no quality function of our PR class, satisfies Toby Pereira's "Universal-Liking Condition" (ULC):

ULC: After universally-liked candidates win, then there must be proportionality about the remaining winners.

So I think there is a theorem lurking here (conjecture for present) that no quality function of our quite-wide-seeming "linear" class, is capable of both PR and ULC.

We also could consider several more-refined forms of ULC, all of which appear to be failed by all election methods arising from maximizing the present paper's kind of quality function.

CRC (common rating condition): If all voters are colored, and all candidates are either colored or uncolored; all voters rate candidates of their same color 1 but rate all candidates of other colors 0; and uncolored candidates always are rated with a score that depends on the candidate alone and not on the voter; then: the elected parliament should exhibit the same color-composition as the electorate among colored-MPs only.

SFC (sub-faction condition): After candidates universally liked among Pink voters only win, then the subfactions of the Pinks should then get proportional representation about all additional Pink winners.

In another paper I constructed voting methods based on score-style ballots and "nonlinear" quality functions which satisfy ULC and CRC. Unfortunately, my nonlinearity "cure" was worse than the disease in the sense that those methods all failed – while the present paper's psi and harmonic voting methods both satisfy

Monotonicity: Voters, by raising the score of a candidate X, should help him win. In particular, if parliament A has greater or equal scores (MP-wise) on each ballot than parliament B, with some greater, then B should not win.

Proof that psi and harmonic voting both satisfy monotonicity: The quality function Q increases for all X-containing parliaments, while the Q's for X-omitting parliaments remain unaltered.

Participation: A voter by honestly voting, cannot worsen the result of the election, where "worsen" means "decrease Q." In particular, by voting, you cannot cause X to be elected instead of Y (with all other winners staying the same) if you scored Y higher than X.

Proof that psi and harmonic voting both satisfy participation: The vth summand in the Psi (or harmonic) quality function, i.e. the summand controlled by voter-v's ballot, always "pulls in the correct direction," i.e. increasing Q for parliaments that voter-v thinks have greater Q versus parliaments that voter-v considers to have lesser Q.

The sum structure of the psi quality function formula, i.e. as a sum, over voters v, of the parliament-quality in the view of that voter – which summand depends for any given parliament on that voter's ballot alone – guarantees the participation property. Other-structured Q-formulas cannot say that.

In contrast, STV systems fail that criterion even in the single winner case; and for the RRV (reweighted range voting) system (which satisfies monotonicity and participation in the single-winner case), I had constructed a 140-voter 3-candidate 2-winner election example in which, before you vote, {Z,Y} win, but after your "Y>X>Z" vote, instead {Z,X} win.

It is technically possible to satisfy ULC, SFC, and CRC by "cheating" as follows. Observe that our definition of "PR" (and of ULC, etc) all are silly in the sense that they only apply when voters & candidates are split into exactly-disjoint "colored" factions; and Pereira's definition of "universally-liked" also is silly. Here "silly" means that, in reality, these requirements would essentially never be exactly satisfied. The idea is to exploit/abuse that silliness because the silly situations where these definitions apply do not overlap and hence cannot contradict. So insert special rules into the voting system design that detect all such situations and respond to them appropriately. Then, what the voting method does in the vast bulk of realistic (non-silly) situations technically is irrelevant – as far as satisfying these conditions is technically concerned – so do anything, we do not care what.

But the kind of quality measure Q we've proposed in the present paper does not exploit this silliness and hence cannot particularly abuse it, and then we conjecturally get a contradiction & impossibility. An attempt to formalize the notion of "not cheating" would be the

Continuity condition: The voting method must be expressible in such a way that each possible W-winner set has a real-valued "quality" which is a continuous and smooth function of the scores on the ballots (which with monotonicity may be demanded to be a monotonic function of any one score varied in isolation), and such that the maximum-quality parliament is the one that wins.

Which leads us to the open question: is it even possible for any quality measure, or any voting system, to satisfy all these criteria simultaneously?

The continuity condition makes it plausible that the impossibility of satisfying all these conditions (if it is impossible) is topological.

Forest Simmons also points out our quality functions Q could also be used to find "constrained-optimal winner-sets," e.g. if some subset of the W winners were pre-chosen by some other method, then we still could find the Q-maximizing W-winner set among those containing the pre-chosen subset.

Forest Simmons was the original inventor of "logarithmic penalty voting" which can be regarded as the seed from which this all sprang. NYU politics professor Steven J. Brams informed me he agrees with the idea advanced in our strategic voting section that all committee-electing methods which seek optimum PR are inherently severely vulnerable to strategic voting when the committee size becomes large. Bill Gosper invented his brilliant "matrix product path invariance framework" for discovering mathematical identities, of which his amazing 5F4 formula is merely one instance. Toby Pereira invented what I have called the "Kotze-Pereira transform" and pointed out that it improves (at least in his view) the behavior of psi voting on certain election examples; I then realized that it just yields the harmonic voting system in example A. Pereira also suggested the ULC, CRC, and SFC conditions. (We later found out that the idea of the "Kotze-Pereira transform" had earlier – in 2010 – been posted by somebody calling themself "Kotze.")

I only realized this after all this was done (being unable to read Danish...) but Thiele in his 1895 "Om flerfoldsvalg" starting p.424 actually more or less stated our "harmonic voting" and "psi voting" methods in the special case where (1) approval-style ballots are employed (note, in this case Harmonic and Psi voting are identical) and (2) the "d'Hondt" case Δ=1! Thiele then quickly dismissed this idea as computationally infeasible in the pre-computer age, because the number of possible winner-sets could be enormous. Thiele therefore invented (his special case of) RRV as a "greedy" add-one-more-winner-at-a-time approach to approximately maximize QPsi=QHarmonic. That is, RRV always adds the new winner who increases Q the most. (And Thiele was aware that greed could produce suboptimal winner-sets.) Incidentally, Thiele did not call Q a "quality function" but rather the "satisfaction" ("Tilfredshed"). Thiele gave little or no explanation of why he considered this particular Q formula desirable – although the obvious speculation is that it was because he knew at least some of what we know! Thiele also suggested a "reverse greedy" way to remove winners one at a time, removing the one that caused the smallest decrease in Q. One also could, I suppose, imagine interspersed greedy-add and greedy-remove steps.
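Thiele's greedy idea, and his awareness that greed can be suboptimal, can be illustrated with a small sketch. I assume approval-style ballots (each ballot is the set of approved candidates) and the "d'Hondt" case Δ=1, in which each voter contributes the harmonic sum 1+1/2+...+1/n for his n approved winners; that differs from the Ψ form only by an additive constant. The function names and the 10-voter example are illustrative assumptions of mine.

```python
from itertools import combinations

def harmonic(n):
    """1 + 1/2 + ... + 1/n, with harmonic(0) = 0."""
    return sum(1.0 / k for k in range(1, n + 1))

def quality(ballots, winners):
    """Thiele 'satisfaction': each voter scores harmonic(# approved winners)."""
    chosen = set(winners)
    return sum(harmonic(len(chosen & ballot)) for ballot in ballots)

def greedy_thiele(ballots, num_candidates, W):
    """Add, one at a time, the candidate who raises the quality the most."""
    winners = []
    for _ in range(W):
        pool = [c for c in range(num_candidates) if c not in winners]
        winners.append(max(pool, key=lambda c: quality(ballots, winners + [c])))
    return winners

def optimum(ballots, num_candidates, W):
    """Exhaustive search over all W-element winner-sets."""
    return max(combinations(range(num_candidates), W),
               key=lambda ws: quality(ballots, ws))

# 10 voters, 3 candidates, 2 winners.  Candidate 0 has the most approvals,
# so greed elects him first, yet the best pair is {1, 2}.
ballots = [{0, 1}] * 3 + [{0, 2}] * 3 + [{1}] * 2 + [{2}] * 2
g = greedy_thiele(ballots, 3, 2)
b = optimum(ballots, 3, 2)
print(g, quality(ballots, g))   # [0, 1] 9.5
print(b, quality(ballots, b))   # (1, 2) 10.0
```

Here the greedy winner-set reaches quality 9.5 while the exhaustive optimum reaches 10, because electing {1,2} gives every voter exactly one approved winner.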

Milton Abramowitz and Irene A. Stegun (eds.): Handbook of Mathematical Functions, U.S. Government Printing Office 1972 with corrections (first printing June 1964).

Horst Alzer: On some inequalities for the gamma and psi functions, Maths of Computation 66,217 (Jan. 1997) 373-389.

Douglas J. Amy: Online bibliography about proportional representation, https://www.mtholyoke.edu/acad/polit/damy/Bibliography/history_of_pr_in_the_united_stat.htm.

S.Arora, C.Lund, R.Motwani, M.Sudan, M.Szegedy: Proof verification and hardness of approximation problems, JACM 45,3 (1998) 501-555.

Eyjolfur Asgeirsson & Cliff Stein: Vertex Cover Approximations: Experiments and Observations, Proceedings of the 4th International Workshop on Experimental and Efficient Algorithms (WEA 2005) 545-567; Springer Lecture Notes in Computer Science #3503.

Kathleen L. Barber: A right to representation: proportional election systems for the twenty-first century, Ohio State University Press, Columbus OH, 2000. JF1071.B37

R. Bar-Yehuda & S. Even: A local ratio theorem for approximating the weighted vertex cover problem, Annals of Discrete Mathematics 25 (1985) 27-45.

Mihir Bellare & Phillip Rogaway: The complexity of approximating a nonlinear program, Mathematical Programming 69, 1 (July 1995) 429-441.

Kenneth Benoit: Which Electoral Formula Is the Most Proportional? A New Look with New Evidence, Political Analysis 8,4 (2000) 381-388.

Claude Berge: Regularizable graphs, Annals Discrete Maths 3 (1978) 11-19.

David M. Bradley: Ramanujan's formula for the logarithmic derivative of the gamma function, Mathematical Proceedings of the Cambridge Philosophical Society 120,3 (Oct. 1996) 391-401.

Moses Charikar: On semidefinite programming relaxations for graph colorings and vertex cover, Proc. 13th Symposium on Discrete Algorithms (SODA 2002) 616-620.

W.J.Cody, A.J.Strecock, H.C.Thacher Jr: Chebyshev approximations of the Psi function, Maths of Computation 27,121 (Jan. 1973) 123-127.

P.Crescenzi, R.Silvestri, L.Trevisan: On weighted versus unweighted versions of combinatorial optimization problems, Information and Computation 167,1 (2001) 10-26

Irit Dinur & Shmuel Safra: On the Hardness of Approximating Minimum Vertex-Cover, Annals of Mathematics 162,1 (2005) 439-485.

Uriel Feige: Vertex cover is hardest to approximate on regular graphs, Technical report MCS 03-15 of the Weizmann Institute, 2003.

Michael R. Garey & David S. Johnson: Computers and Intractability: A Guide to the Theory of NP-Completeness, Freeman 1979.

R. William Gosper: Acceleration of series, MIT artificial intelligence lab memo #304, March 1974.

M.Grötschel, L.Lovász, A.Schrijver: The ellipsoid method and its consequences in combinatorial optimization, Springer 1988.

Augustus R. Hatton: The second proportional representation election in Kalamazoo, National Municipal Review 9,2 (Feb. 1920) 84-92. See p.81: "The small number of first choices he received was apparently due to the expectation of a large majority of voters that his re-election was a matter of course..."

I.D.Hill, B.A.Wichmann, D.R.Woodall: Algorithm 123 – Single transferable vote by Meek's method, Computer Journal 30,3 (1987) 277-281.

Aanund Hylland: Proportional representation without party lists, pp.126-153 in Rationality and Institutions: Essays in honour of Knut Midgaard on the occasion of his 60th birthday, February 11, 1991 ed. Raino Malnes & Arild Underda, published 1992 by Universitetsforlaget AS, Oslo. See quote beginning "Both for groups and for individual voters it could be advantageous not to vote for a candidate who is considered certain of winning election, even if that candidate is one's first choice..."

Svante Janson: Asymptotic bias of some election methods, Annals of Operations Research 215,1 (2014) 89-136.

Hans-Heinrich Kairies: Über die logarithmische Ableitung der Gammafunktion, Math. Annalen 184,2 (July 1970) 157-162.

Richard M. Karp: Reducibility among combinatorial problems, pp.85-103 in R.E. Miller & J.W. Thatcher (eds.) Complexity of Computer Computations, Proc. Sympos. on the Complexity of Computer Computations. New York: Plenum 1972.

Subhash Khot & Oded Regev: Vertex cover might be hard to approximate to within 2-ε, Journal of Computer and System Sciences 74,3 (2008) 335-349.

Erica Klarreich: Approximately Hard: The Unique Games Conjecture, A new conjecture has electrified computer scientists, Oct. 2011, Simons Foundation website. Also a later more-popular version The Unique Games Conjecture, A Grand Vision for the Impossible by Thomas Lin & Klarreich (with accompanying video), was published in Quanta Magazine, August 2014.

Donald E. Knuth: Art of computer programming, volume 4 (book not yet published).

Enid Lakeman & James Lambert: Voting in Democracies ???1955, only one edition of the book has the randomized how-to-vote cards strategy story in it, other editions omit it

S.Lewanowicz & S.Paszkowski: An analytic method for convergence acceleration of certain hypergeometric series, Maths. of Computation 64,210 (April 1995) 691-713.

Yudell L. Luke: Rational approximations for the logarithmic derivative of the gamma function, Applicable Analysis 1,1 (1971) 65-73.

Brian L. Meek: Une nouvelle approche du scrutin transferable, Mathematiques et sciences humaines 25 (1969) 13-23, and 29 (1970) 33-39. Also reprinted in Voting Matters 1 (1994) translated into English as A new approach to the single transferable vote, parts I and II. There also is a paper titled "Meek versus Warren" in Voting Matters 20 (2005) contrasting Brian Meek's complicated STV variant versus Hugh Warren's similarly motivated complicated STV variant. Two attempts to explain Meek STV, from the PR society of Australia, are by Hill and Kitchener.

Panos M. Pardalos & Stephen A. Vavasis: Quadratic programming with one negative eigenvalue is NP-hard, J. Global Optimization 1,1 (1991) 15-22.

Lars Edvard Phragmén: Till frågan om en proportionell valmetod [On the Question of a Proportional Election Method], Statsvetenskaplig Tidskrift 2,2 (1899) 87-95. Phragmén also mentions that his method was first described in "Proportionella val" [Proportional Elections], Svenska spörsmål 25 (1895) Stockholm.

Ariel D. Procaccia, Jeffrey S. Rosenschein, Aviv Zohar: On the complexity of achieving proportional representation, Social Choice & Welfare 30,3 (April 2008) 353-362.

Feng Qi & Bai-Ni Guo: Sharp inequalities for the psi function and harmonic numbers, Analysis 34,2 (June 2014) 201-208.

Prasad Raghavendra: Optimal Algorithms and Inapproximability Results for Every CSP?, Proceedings of the 40th annual ACM symposium on Theory of computing (STOC 2008) 245-254.

Prasad Raghavendra & David Steurer: How to round any CSP, Proc. Sympos. Foundations of computer science (FOCS 2009).

Karine Van der Straeten, J-F.Laslier, N.Sauger, A.Blais: Strategic, sincere, and heuristic voting under four election rules: an experimental study, Social Choice and Welfare 35,3 (September 2010) 435-472.

Nore B. Tenow: Felaktigheter i de Thieleska valmetoderna [problems with Thiele's methods], Statsvetenskaplig Tidskrift [Swedish state scientific journal published by Fahlbeckska Foundation of Political Science in Lund] (1912) 145-165.

Thorvald N. Thiele: Om flerfoldsvalg [On multiple election], Oversigt over Det Kongelige danske videnskabernes selskabs forhandlinger = Bulletin de L'Académie royale des sciences et des lettres de Danemark, Copenhague Kongelige Danske Videnskabernes Selskab = Royal Danish Academy of Sciences and Letters 3,4 (1895-6) 415-441.

Nicolaus Tideman: The Single Transferable Vote, J. Economic Perspectives 9,1 (winter 1995) 27-38.

Leon Weaver & James L. Blount: Hamilton: PR defeated by its own success, pp.209-240 in Kathleen L. Barber: Proportional Representation and Election Reform in Ohio (with a foreword by John B. Anderson), Ohio State University Press, Columbus OH, 1995. JS451.O35 B37. See p.230 "As in most elections, strategic voting appears to have affected outcomes... over time surpluses of popular candidates seemed to shrink as their supporters learned to give their first-choice votes to candidates less assured of election..."

Douglas R. Woodall: Computer counting in STV elections, Representation 23 (1983) 4-6 [Reprinted in Voting Matters 1 (1994) 11-12.] Second paragraph discusses "Woodall free ridership" strategy.

Douglas R. Woodall: Properties of Preferential Election Rules, Voting Matters 3,4 (Dec. 1994). There also was a later paper by Woodall with considerable overlap with this one: Monotonicity of single-seat preferential election rules, Discrete Applied Mathematics 77 (1997) 81-98.

David Zuckerman: On Unapproximable Versions of NP-Complete Problems, SIAM J. Computing 25,6 (1996) 1293-1304.

Everything is for a V-voter C-candidate W-winner election, 0<W<C, 0<V.

The following pseudocode is based on working C-language code. It employs "algorithm R" from Knuth volume 4's §7.2.1.3 to generate all W-element subsets.

On my 2 GHz single core machine (made in 2006), the runtime is about 4V·Binomial(C,W) nanoseconds.

By the way, it is rather absurd for voters to be asked to rank-order 110 candidates. Instead about 98% of Australian Senate-voters just regurgitate a pre-defined rank order recommended by one party or another. That moots the whole goal of even having the preference-ordering system. But we'll pretend that our voters really did genuinely rank-order all 110, in which case...

That would take my computer 1-4 million years via the brute force algorithm below.

Its mission is, from among all W-element subsets of the C candidates, to find the one maximizing

Here Δ is a positive constant pre-chosen by the voting system designer (½≤Δ≤1 is recommended and conjecturally Δ=½ is the best choice), and Ψ(x) is the "psi function" discussed in the preceding appendix.

The indices of the winning candidates are returned in the array winner[0..W-1]. Each such index lies in the integer interval [0, C-1]. The algorithm employs the real array S[0..V-1] and the uint (unsigned integer) array x[0..W], as workspace. The input ballot-data is the array score[0..CV-1] where score[jV+k] is the score awarded to candidate j by voter k, where 0≤k<V and 0≤j<C. It is assumed all these scores have been prescaled to lie within the real interval [0, 1] where 0 is the score a voter would award to a hated candidate and 1 to an excellent candidate. For voters who do not score some candidate, that blank entry of the score array must be filled in with that candidate's average score (among those voters who did score him). In that way the abstaining voters will leave that candidate's average unaffected. The value Q of the quality function for the optimum winner-set, is returned directly.
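A minimal sketch of the brute-force search just described, written in Python rather than C. It rests on three assumptions of mine: that the psi quality function is the sum, over voters v, of Ψ(Δ + sum of the scores voter v gave the winners); that `itertools.combinations` is an acceptable stand-in for Knuth's algorithm R (it visits the same subsets in a different order); and that a recurrence-plus-asymptotic-series `digamma` is accurate enough. The names `digamma` and `psi_vote` are hypothetical.

```python
import math
from itertools import combinations

def digamma(x):
    """Psi(x) for x>0: shift-by-1 recurrence up to x>=6, then a truncated
    asymptotic series (error roughly 1e-10 or smaller)."""
    acc = 0.0
    while x < 6.0:
        acc -= 1.0 / x            # Psi(x) = Psi(x+1) - 1/x
        x += 1.0
    t = 1.0 / (x * x)
    tail = t * (1/12 - t * (1/120 - t * (1/252 - t / 240)))
    return acc + math.log(x) - 0.5 / x - tail

def psi_vote(score, V, C, W, delta=0.5):
    """score[j*V + k] = score in [0,1] given to candidate j by voter k.
    Returns (winner tuple, Q) maximizing the assumed psi quality function."""
    best_q, best_set = -math.inf, None
    for winners in combinations(range(C), W):
        q = 0.0
        for k in range(V):
            total = sum(score[j * V + k] for j in winners)
            q += digamma(delta + total)
        if q > best_q:
            best_q, best_set = q, winners
    return best_set, best_q

# Two-faction example: voters 0-3 like candidates 0-2, voters 4-5 like 3-5.
V, C, W = 6, 6, 3
score = [1.0 if (j < 3) == (k < 4) else 0.0 for j in range(C) for k in range(V)]
winners, q = psi_vote(score, V, C, W)
print(winners, q)
```

With a 4:2 split of voters and 3 seats, the maximizing parliament contains two candidates from the larger faction and one from the smaller, which is the proportional outcome.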


This algorithm should be susceptible to large speedup if we are willing to sacrifice 100% confidence of correctness. The idea is to use random subsets of voters, not the whole set. Then whenever the current voter-subset thinks some winner-set is unusually good, investigate it using a larger voter-subset... and so on, eventually employing the full voter-set. The point is that low-quality candidate-sets are dismissed from consideration without computing their quality Q exactly; we instead rely on a fast and noisy estimate of their Q. This can be made "rigorous" in the sense that via statistics we can estimate the probability that any such "pruning" decision is going to be erroneous, and design the algorithm to keep those probabilities below some safety threshold. Then you won't be 100% sure your election winner set was right, but you will be 99.99999% sure (or whatever) and save a lot of time; and the algorithm could keep raising its probability estimate over time, thus rapidly reporting election results which, however, might be incorrect, and then more slowly producing more confident results. Note that, for example, if N runs of this algorithm (using independent randomness each time!) all produced the same conclusions, then the failure probability would be raised to the Nth power; this makes it apparent that extremely small failure probabilities are attainable in this fashion.

The natural logarithm is defined by

But if we want to understand the sum

It is possible to define Ψ(x) without any knowledge of calculus via

Characterization: Ψ(x) is the unique real-valued function of positive-real argument x obeying i, ii, and either iii or iv:

Proof: The characterization from i,ii,iii is Satz 1 in Kairies 1970. From i,ii,iv: let Z(x) denote the integral of Ψ(x), which if we cheat we know is Z(x)=lnΓ(x)+C. This integral must exist because Ψ(x) is by i, ii, iv well-behaved enough to integrate. By integrating ii we get that Z(x) obeys Z(x+1)=Z(x)+ln(x). By iv we see that Z(x) is concave-∪. Hence by the Bohr-Mollerup theorem Z(x) is uniquely determined except for the constant of integration C. So by differentiating Z(x) we get that Ψ(x) is uniquely determined. QED.

Tremendous speed in computing the psi function (or anyhow the "F" function) is essential for our voting scheme; trillions of F-evaluations might be made to handle one election. The F implementation is the speed bottleneck, and elections are infeasible to handle if too large.

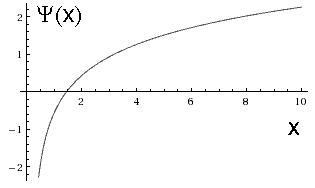

The x-derivative Ψ'(x) obeys Ψ'(x)>0 for all x>0, meaning the psi function is monotone increasing for all x>0 from Ψ(0+)=-∞ to Ψ(∞)=+∞. The second derivative is negative: Ψ"(x)<0 for all x>0, meaning the psi function is concave-∩ for all x>0. Its relation to the "harmonic sum" is that, for integer n≥0, Ψ(n+1) = -γ + ∑1≤k≤n 1/k,

where -Ψ(1)=γ=0.5772156649... is the Euler-Mascheroni constant. To prove this, it suffices to show the shift-by-1 recurrence Ψ(n)+1/n=Ψ(n+1), which arises from Γ(x+1)=xΓ(x) so that lnΓ(x+1)=ln(x)+lnΓ(x), whose x-derivative is Ψ(x+1)=1/x+Ψ(x).
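As a sanity check on the harmonic-sum relation and the shift-by-1 recurrence, here is a small numerical sketch. It assumes only that Ψ is the derivative of lnΓ, and approximates that derivative by a central difference of the standard-library `math.lgamma`. The helper name `digamma_numeric` and the step size are my own choices, and this is far too slow and inaccurate for production use.

```python
import math

EULER_GAMMA = 0.5772156649015329

def digamma_numeric(x, h=1e-5):
    """Central-difference derivative of ln Gamma at x (sanity checks only)."""
    return (math.lgamma(x + h) - math.lgamma(x - h)) / (2.0 * h)

def harmonic(n):
    """1 + 1/2 + ... + 1/n, with harmonic(0) = 0."""
    return sum(1.0 / k for k in range(1, n + 1))

# Psi(n+1) should agree with harmonic(n) - gamma for integer n >= 0.
for n in range(6):
    lhs = digamma_numeric(n + 1)
    rhs = harmonic(n) - EULER_GAMMA
    print(n, round(lhs, 6), round(rhs, 6))

# The recurrence Psi(x+1) = Psi(x) + 1/x also holds at non-integer x.
x = 2.3
print(round(digamma_numeric(x + 1) - digamma_numeric(x), 6), round(1 / x, 6))
```

The two columns printed for each n should match to the displayed precision.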

The doubling formula is

There also is a nice relationship to the "odd harmonic sum" (A&S 6.3.4):

Dan Asimov pointed out to me that we can interpolate the following "generalized harmonic sum" by an analytic function:

(Asimov claims he discovered this but then realized it was already known and mentioned somewhere in Whittaker & Watson's Modern Analysis. To prove it expand the first two terms inside the integrand as a terminating power series in x, then integrate it term by term.) The original harmonic sum arises as the special case s=1 and is equivalent to the psi function interpolation. From it we see

which might be useful for evaluating Ψ(x+1) when x≈1 is near some y such that Ψ(y+1) is already known.

Its integrand

Two other integrals (the first is A&S 6.3.21, the second I derived myself in a parallel manner) are

If either integral is evaluated numerically using N-node Gauss-Laguerre integration (but based on the weight function e^(-2πu) rather than the standard e^(-u); actually for the second integral one could base it on the weight function u·e^(-2πu) instead), then the result is rational approximations of Ψ(x) of the form

where

where the N abscissae xk and weights wk, for N-node Gauss-Laguerre quadrature with standard weight function e^(-u), are given in table 25.9 of Abramowitz & Stegun as well as other sources. Other rational approximations have been stated by Luke, Cody et al, and Lewanowicz & Paszkowski.

The Ψ(x+1) and Ψ(x) and ln(x) functions all are within ±O(1/x) of each other, for large x. Indeed Alzer proved

while Qi & Guo proved

which brackets Ψ(x) between bounds at most ln(2)-γ<0.116 apart. By using the Euler-Maclaurin summation formula with error bound, one may show

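and more generally that, for any particular x>0, any truncation of the divergent asymptotic series

Ψ(x) ~ ln(x) - 1/(2x) - ∑n≥1 B_2n/(2n·x^(2n)) = ln(x) - 1/(2x) - 1/(12x^2) + 1/(120x^4) - 1/(252x^6) + ...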

(where the B_2n are Bernoulli numbers) yields an approximation whose error is of the same sign as, and less in magnitude than, the first neglected term. Similar remarks apply for

For small x>0, the behavior instead is

Ψ(x) = -1/x - γ + ζ(2)x - ζ(3)x^2 + ζ(4)x^3 - ...

where this series converges when |x|<1 and ζ(s)=∑n≥1 n^(-s) denotes the Riemann zeta function with

Actually, for anybody wanting to evaluate Ψ(x) within some particular 1-wide subinterval [B, B+1] of the positive reals, it would be better to base things on a series expansion of

now with

For example with B=1 we have A=√6-1 and asymptotic |term|-ratio 1/(5+√24)≈0.10102 for the power series and ≈0.05064 for the Chebyshev series.

C.F.Gauss showed that the value of H(x) could be expressed in closed form whenever x=p/q is rational with 0<x<1 (and hence, via the shift-by-1 recurrence, for any rational x):

In particular

R.W.Gosper in 1974 showed how to express H(x) as a different series. His sum terminates for integer x≥0 and "converges faster" than the original (also terminating) sum. For non-integer x Gosper's series does not terminate, but it always converges:

Each summand in Gosper's series is a rational function of x with denominator=j+x and having numerator which is a polynomial(x) of degree=j+1. With a little cleverness, the first N terms in Gosper's series can all be computed in O(N) arithmetic operations in total. In 2015 Gosper, at my request, found this more general identity in less than 1 day:

Here 5F4 denotes a hypergeometric series. Gosper's older formula for H(x) arises from the special case η=1, but notice that η=½ also is nice. This series is a thing of beauty. It converges for all real or complex x and η for which the left hand side is finite.

Here is a simple algorithm to compute Ψ(x) accurate to within ±10^(-15) for any x>0 (except that the discreteness of the floating point computer-representable subset of real numbers prevents any method from achieving that accuracy for very small x). If one also wanted x<0 (which we, for election purposes, do not) then one could use the reflection identity

Ψ(1-x) = Ψ(x) + π·cot(πx).

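    #include <math.h>
    typedef double real;   // assumed here: "real" means double
    #define ln log         // assumed here: ln is the natural logarithm
    #define EulerMasch 0.57721566490153286061
    #define Zeta2 1.64493406684822643647
    #define Zeta3 1.20205690315959428540
    #define Zeta4 1.08232323371113819152

    real Ψ(real x){ // Ψ(x) = Γ'(x)/Γ(x). We ASSUME x>0.
      real p, y, r;
      // Tiny x: first terms of the small-x power series.
      if(x < 0.000287){ return((Zeta2-x*(Zeta3-x*Zeta4))*x-EulerMasch-1.0/x); }
      // Shift-by-1 recurrence until y>=14.9, accumulating -1/y.
      for(y=x, p=0.0; y<14.9; y += 1.0){ p -= 1.0/y; }
      // Asymptotic series at y, written in powers of r = 1/(4y^2).
      r = 0.5/y;
      p += ln(y) - r;
      r *= r;
      p -= r * (1/3.0 - r * (2/15.0 - r * (16/63.0 - r * (16/15.0 - r * 256/33.0))));
      return(p);
    }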


More efficient and/or accurate algorithms could be based on Cody et al 1973. Here's a faster ad hoc psi algorithm which has been tested to yield accuracy within ±9×10^(-12) for all x≥0.0001, and within ±7×10^(-12) (which ought to be enough accuracy for any country's elections) for all x≥0.0003:

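    real Ψ(real x){ // Ψ(x) = Γ'(x)/Γ(x). We ASSUME x>0.
      real p, y, r;
      // Tiny x: first terms of the small-x power series.
      if(x < 0.00017){ return((Zeta2-Zeta3*x)*x-EulerMasch-1.0/x); }
      p = 3.7934e-12; // small constant offset that recenters the error
      // Shift-by-1 recurrence until y>=7.01, accumulating -1/y.
      for(y=x; y<7.01; y += 1.0){ p = p - 1.0/y; }
      // Shortened series in r = 1/(4y^2) with adjusted coefficients.
      r = 0.5/y;
      p = p + ln(y) - r;
      r = r*r;
      p = p - r*((0.24441724*r-0.1333077274)*r+0.33333331683);
      return(p);
    }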


This algorithm runs in roughly 240 clock cycles on my machine, on average for random-uniform x in the real interval [0,5].

Regarding the "Simmons function"

and these derivatives

The Gosper 5F4 formula is

The pattern behind the rational sequence is

Working C language code for the algorithms discussed in this paper is available here: /CleanOptPRVote.c.